It took a New Orleans street magician only 20 minutes and $1 to create audio that sounded like President Joe Biden discouraging Democrats from voting in the New Hampshire primary.

The robocall was found to be a product of artificial intelligence, and soon after, the Federal Communications Commission banned AI-generated voices in robocalls.

But identifying AI-generated audio in the wild is easier said than done.

We tested four free online tools that claim to determine whether an audio clip is AI-generated. Only one of them signaled that the Biden-like robocall was likely AI-generated. Experts told us that AI audio detection tools are lacking in accuracy and shouldn’t be trusted on their own. Nevertheless, people can employ other techniques to spot potential misinformation.

“Audio deepfakes may be more challenging to detect than image or video deepfakes,” said Manjeet Rege, director of the Center for Applied Artificial Intelligence at the University of St. Thomas. “Audio lacks the full context and visual cues of video, so believable audio alone is easier to synthesize convincingly.”

The challenge of identifying audio deepfakes

Many people have called a business or government agency and heard an automatic response from a synthetic voice.

But only recently did people start using the technology to create deepfakes, said Siwei Lyu, a computer science and engineering professor at the University at Buffalo.

Lyu said audio deepfakes typically fall into two types: text-to-speech and voice conversion. The biggest difference between the two, he said, is the input. Text-to-speech systems take written text and convert it into what sounds like a spoken voice. Voice conversion, by contrast, takes a recording of a person’s voice and manipulates it so that it sounds like another person’s voice, retaining the emotion and inhalation patterns of the original speech.

Creating deepfake audio is an attractive alternative to creating deepfake video because audio is easier and cheaper to produce.

For example, the online startup ElevenLabs offers a free plan to people seeking to convert text to speech. The startup has gained prominence in the industry and was recently valued at $1.1 billion after raising $80 million in venture capital funding. Its paid plans start at $1 per month, and its products also include voice cloning, which allows users to create synthetic copies of their own voices, and a tool that can help people classify whether audio is AI-generated.

“The rise of audio deepfakes opens up disturbing possibilities for spreading misinformation,” Rege said. Aside from making it possible for machines to impersonate public figures, politicians and celebrities, falsified audio could also be used to trick security systems that make use of voice authentication, he said. For example, a Vice reporter in February 2023 demonstrated how he was able to trick his bank’s authentication system by calling its service line and playing clips of his AI-powered cloned voice.

Rege warned that fake audio could have implications for court cases, intelligence operations and politics. Some potential scenarios:

- Using fake audio recordings to prompt authorities to pursue false intelligence targets

- Submitting fake audio recordings of defendants confessing to crimes or making incriminating statements

- Impersonating public officials’ voices to spread disinformation or obtain sensitive information

Rege and Lyu said synthetic audio is created using “deep learning” technology that trains AI models to learn characteristics of speech based on a large dataset of diverse speakers, voices and conversations. With this information, the technology can recreate speech.

PolitiFact identified TikTok and YouTube accounts that uploaded videos that perpetuated false narratives about the 2024 election using audio that expert analysis showed was generated by AI.

Because audio is one-dimensional and more ephemeral than images and videos, Lyu said, it’s harder to tell when it’s not real, which makes it more effective at misleading people. It’s also harder to review a piece of audio for signs of AI generation. You can pause a video or inspect an image you encounter online. But if you pick up a call, you might not realize you’re listening to AI-generated audio, or you might not have a chance to record it. Without a digital copy, it would be hard to analyze the audio.

Detection tools fall short

With audio deepfake technology evolving quickly, the tools designed to detect audio deepfakes are struggling to keep up.

“Detecting audio deepfakes is an active research area, meaning that it is currently treated as an unsolved problem,” said Jennifer Williams, a lecturer at the University of Southampton who specializes in audio AI safety.

Many online tools that claim to detect AI-generated voices are available only with a paid subscription or upon demo request. Others ask customers to send the audio file to an email address.

We looked for free options.

V.S. Subrahmanian, a Northwestern University computer science professor, launched his own AI audio detection experiment at the Northwestern Security & AI Lab, which he leads. The group tested 14 off-the-shelf or free, publicly available audio deepfake detection tools, he said. The research is not yet publicly available, but he said the results were discouraging.

“You cannot rely on audio deepfake detectors today and I cannot recommend one for use,” Subrahmanian said.

We persevered anyway and found three free tools: ElevenLabs’ Speech Classifier, AI or Not and PlayHT. We also tested the DeepFake-O-Meter, developed by the University at Buffalo Media Forensic Lab, which Lyu heads.

For our experiment, we obtained a copy of the fake Biden robocall from the New Hampshire attorney general’s office and ran it through the four tools.

In the robocall that circulated before the Jan. 23 New Hampshire primary, a Biden-like voice told Democratic voters that voting in the primary “only enables the Republicans in their quest to elect Donald Trump again.” It encouraged people not to vote until November. Soon after, security software company Pindrop said it found a 99% likelihood that the audio was created using ElevenLabs — a finding that magician Paul Carpenter later confirmed, telling NBC News that it took him less than 20 minutes and $1 to make.

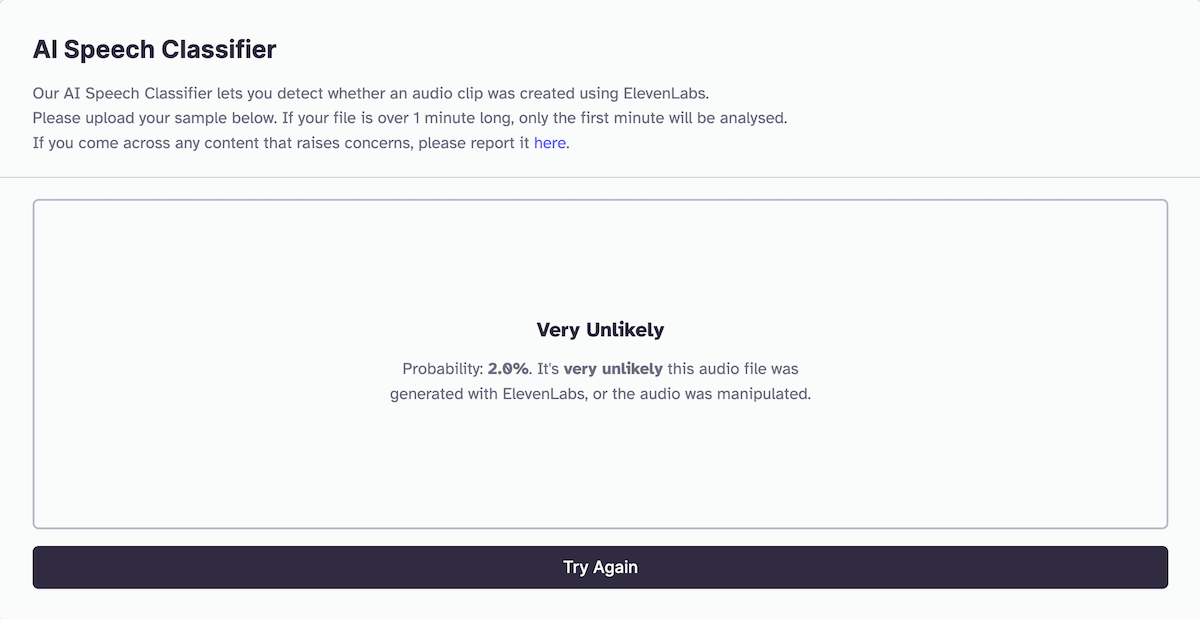

ElevenLabs has its own AI speech classifier — released in 2023, before the robocall circulated — that measures the likelihood of an audio clip being created using its system. We uploaded the audio clip we obtained from the New Hampshire attorney general’s office to ElevenLabs’ speech classifier. The result? It found a 2% probability — “very unlikely” — that the audio was created with ElevenLabs.

(Screenshot/ElevenLabs)

It’s unclear why ElevenLabs returned such a low result. Pindrop also experimented using ElevenLabs’ tool and said it returned an 84% probability score that the audio was created using ElevenLabs. Lyu said audio file compression and other factors can destroy signatures or features that detectors use to detect AI generation. And we do not know whether Pindrop used the same audio file. (Pindrop has its own audio deepfake detection system, available upon demo request.)

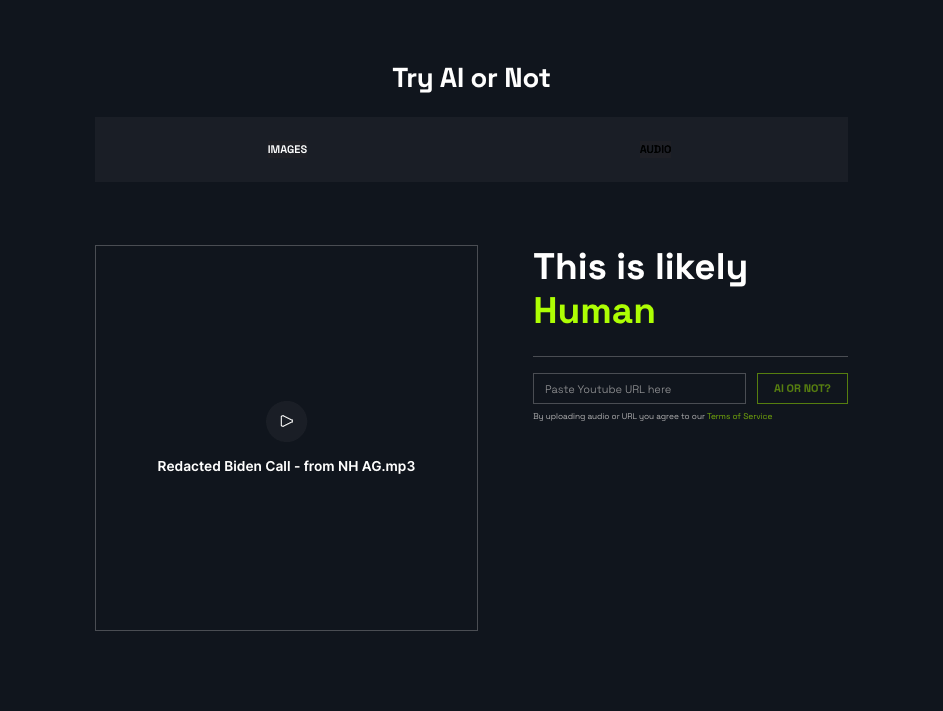

We did the same test using AI or Not, a tool American tech company Optic developed. The result said the audio clip was “likely human.”

Anatoly Kvitnitsky, AI or Not’s chief executive, told PolitiFact that the audio sample “had a lot of noise associated with it,” making its AI origins harder to detect without linguistic experts. “AI was only confirmed when the creator of the recording admitted to it being AI,” Kvitnitsky said.

(Screenshot/AI or Not)

We also tested the audio using PlayHT, but it displayed an error message every time we uploaded the fake Biden audio.

(Screenshot/PlayHT)

We contacted ElevenLabs and PlayHT for comment but did not hear back.

Lyu said fewer detection services are available for deepfake audio than for deepfake images and videos. He said this is partly because deepfake images and videos were developed earlier.

Rege said that although researchers have also developed open-source tools, their accuracy remains to be seen.

“I would say no single tool is considered fully reliable yet for the general public to detect deepfake audio,” Rege said. “A combined approach using multiple detection methods is what I will advise at this stage.”

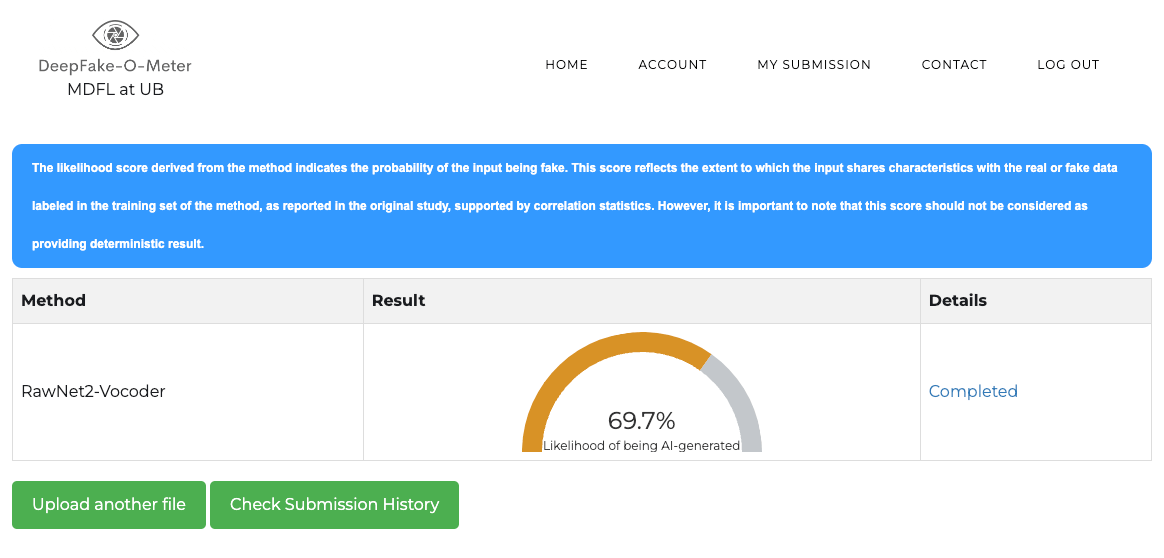

The University at Buffalo Media Forensic Lab’s DeepFake-O-Meter has not officially launched, Lyu said, but people need only create an account to use it for free. The DeepFake-O-Meter returned a 69.7% likelihood score of the Biden audio being AI-generated, the most accurate result among the tools we tested.

(Screenshot/DeepFake-O-Meter)

What to listen for in potential audio deepfakes

Subrahmanian said audio calls requesting money, personal information, passwords, bank codes or two-factor authentication codes “should be treated with extreme caution” and warned people never to give such information over the phone.

“Urgency is a key giveaway,” he said. “Scammers want you (to) do things immediately, before you have time to consult others or think more deeply about a request. Don’t fall for it.”

Lyu and Rege said people should watch for signs of AI-generated audio, including irregular or absent breathing noises, intentional pauses and intonations, along with inconsistent room acoustics.

They also said users should seek to verify the audio’s sources and cross-check the facts.

“Be skeptical of unsolicited audio messages or recordings, especially those claiming to be from authority figures, celebrities, or people you know,” Rege said.

People should use common-sense approaches and ask questions such as who made the call, where it came from and whether its claims are supported by independent and unrelated sources, Lyu said.

In an interview with Scientific American, Hany Farid, a University of California, Berkeley, computer science professor, also stressed provenance — or basic, trustworthy facts about a piece’s origins — when analyzing audio recordings: “Where was it recorded? When was it recorded? Who recorded it? Who leaked it to the site that originally posted it?”

When it comes to legal matters, financial transactions, or important events, Rege said, people can protect themselves by insisting on verifying identities through other secure channels beyond audio or voice.

“Healthy skepticism is warranted given how realistic this emerging technology has become,” Rege said.

This fact check was originally published by PolitiFact, which is part of the Poynter Institute.